Reality Mode

Exercise real flows—not fixtures. Catch "green CI, broken humans" before release.

Classes of failure: 40+ (mock data, dead routes, auth gaps, secret leaks)

Minutes to first scan: < 3 (CLI init → ship check in your repo)

Where it runs: CI · IDE · MCP (same rules from terminal to merge queue)

Minutes to set up, not hours.

npx @guardrail/cli init

guardrail ship

Set and forget

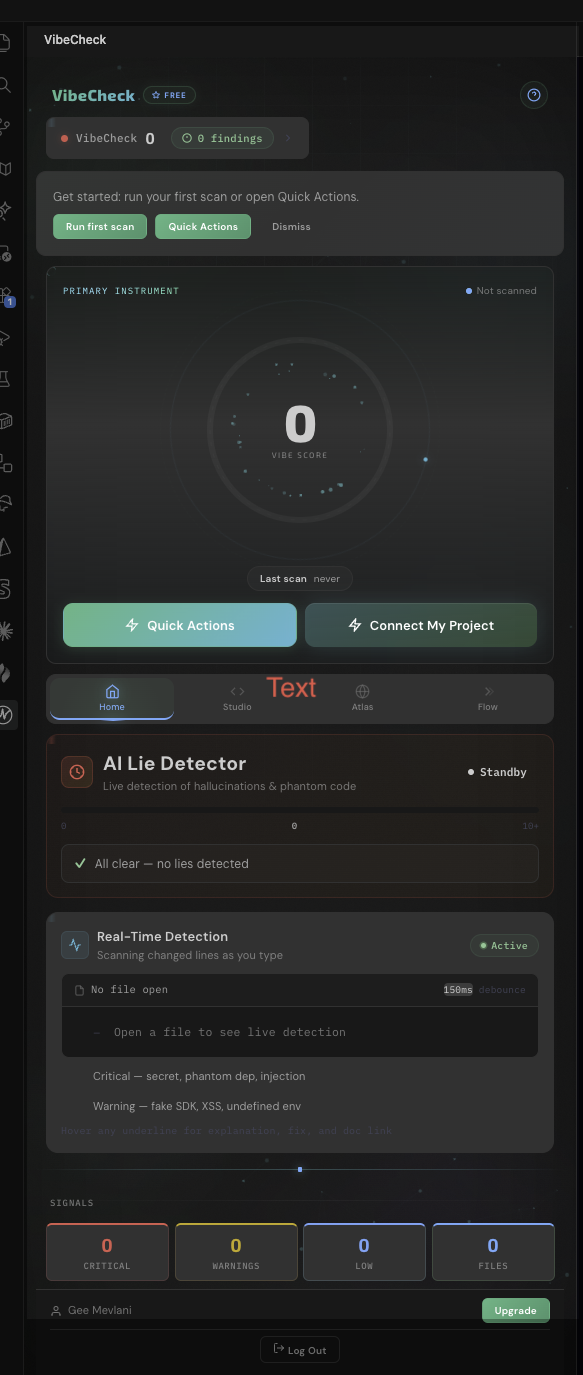

Product surface

Severity, paths, and policy status in one place—so AI-generated optimism doesn't outrun what production actually does.

Capabilities

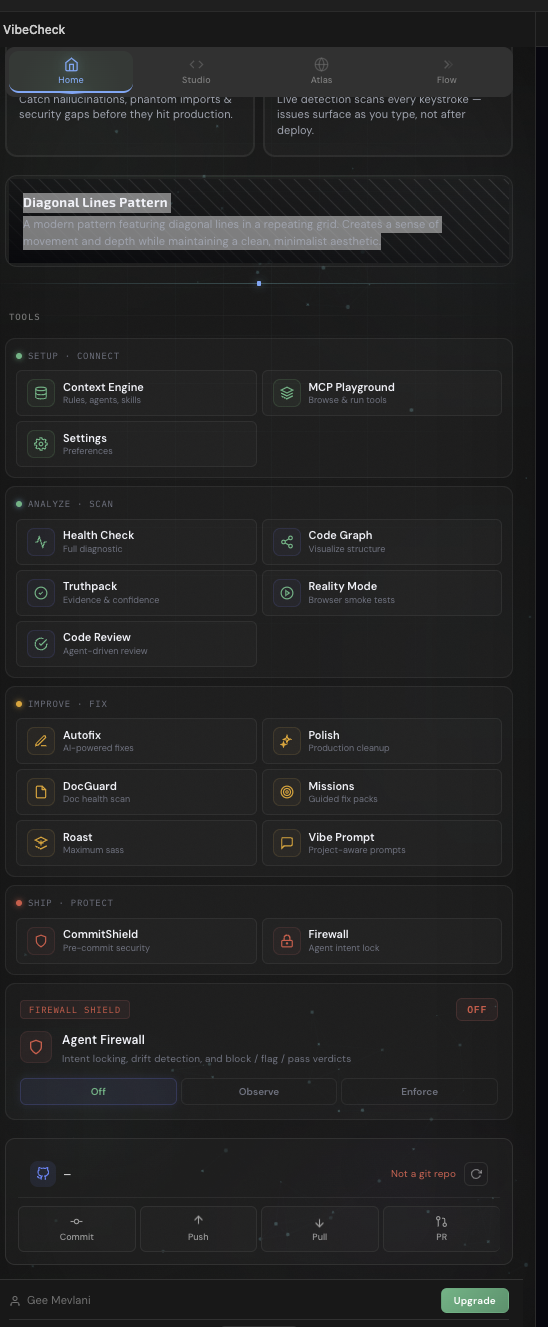

Guardrails inside Cursor, VS Code, and any MCP client.

Block merges on severity, tier, or org rules—automatically.

Mock data, dead routes, auth gaps, secret leaks—modeled for AI-built apps.

Same rules from terminal to merge queue—no drift between environments.

One command tells you if your app works—or only looks like it does.

PDFs and evidence trails your security team can file without rework.

The usual failure mode

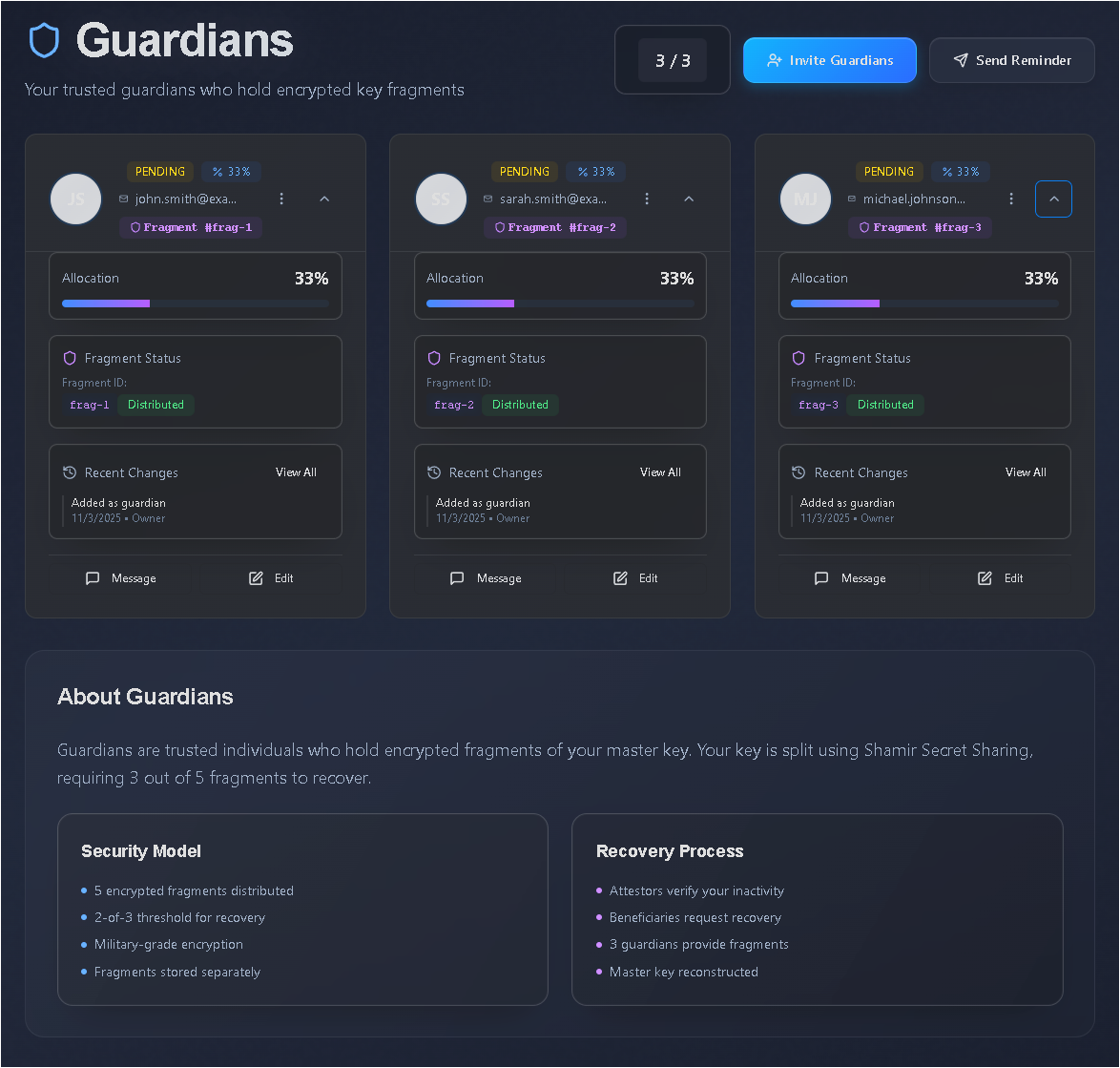

We model the hand-off from AI-generated optimism to what production actually does—so you fix it while it is still cheap.

Fast iterations, optimistic UI, synthetic data that "looks fine."

Unit mocks pass; integration is shallow; security lags behind vibe.

Reality gates, secret detection, and policy blocks on what matters.

Teams

Engineers: catch dead routes, mock data, and auth gaps before the merge queue—without another dashboard to babysit.

Security: exports and evidence that map to how the app actually behaves, not how the README claims it behaves.

Leads: one verdict on readiness—block deploys on severity, tier, or policy; same rules in CI and IDE.

Reality check

guardrail models the hand-off from AI-generated optimism to what production actually does. Reality Mode and ship checks catch fake features, shallow integration, and security drift while fixes are still cheap—then wire the same rules through CI, the IDE, and MCP so judgment doesn't vary by surface.

Real-time security scanning in your terminal

Go beyond static scans—exercise real user flows before you tag a release.

Pricing

Same tiers as in-app. Upgrade when findings stop being hypothetical.

Severity counts & scans, findings blurred · 1 project

Full findings, no auto-fix · 3 projects · $96.00 billed yearly · save ~20%

Most teams start here

Auto-fix & automation · 10 projects · $288.00 billed yearly · save ~20%

Frameworks & audit-ready · 25 projects · $576.00 billed yearly · save ~20%

Cancel anytime. Questions? Talk to us.

Can't find what you need? Reach out to our team.

A tool that verifies your AI-built app actually works before you ship it.

Run one command. guardrail tests your app, finds issues, and tells you exactly what to fix.

Your code never leaves your machine. Everything runs locally.

Works with Cursor, VS Code, Claude Desktop, Windsurf, and any MCP-compatible editor.

Setup takes about two minutes: run the init command and you're ready.

guardrail learns patterns across your repos.

Run npx @guardrail/cli init and get to a first ship check in under three minutes—local execution, no upload required.

One command surfaces broken auth, dead routes, mock data, and leaked secrets before users do—so "green CI" isn't lying to you.

Same rules in Cursor, VS Code, Claude Desktop, GitHub Actions, and any MCP client—no duplicate policy spreadsheets.

Your code stays local. Run scans and Reality checks without handing the repo to a black box you don't control.

Get started

Start free, wire CI, and let Reality Mode argue with your next deploy. Catch fake features and exposed secrets before your users do.